Overcoming Digital Marketing Challenges: Lessons from Industry Experts

When HubSpot surveyed more than 1,500 marketers for its 2026 State of Marketing report, the single most-cited challenge was not creativity, not budget, not even talent. It was measuring marketing ROI — flagged by 33% of respondents, ahead of keeping pace with platform changes (29.8%) and lead generation (29.6%). That ranking is the giveaway. The hardest part of digital marketing in 2026 is not running campaigns; it is proving what they did.

This article walks through the eight digital marketing challenges that actually matter this year, the assumptions buried in each one, and a cleaner read of the data underneath. I will name tools and attribution models specifically. I will flag the difference between a number that is correct and a number that is useful. And I will close with one measurement change you can ship this Monday — not a six-month roadmap.

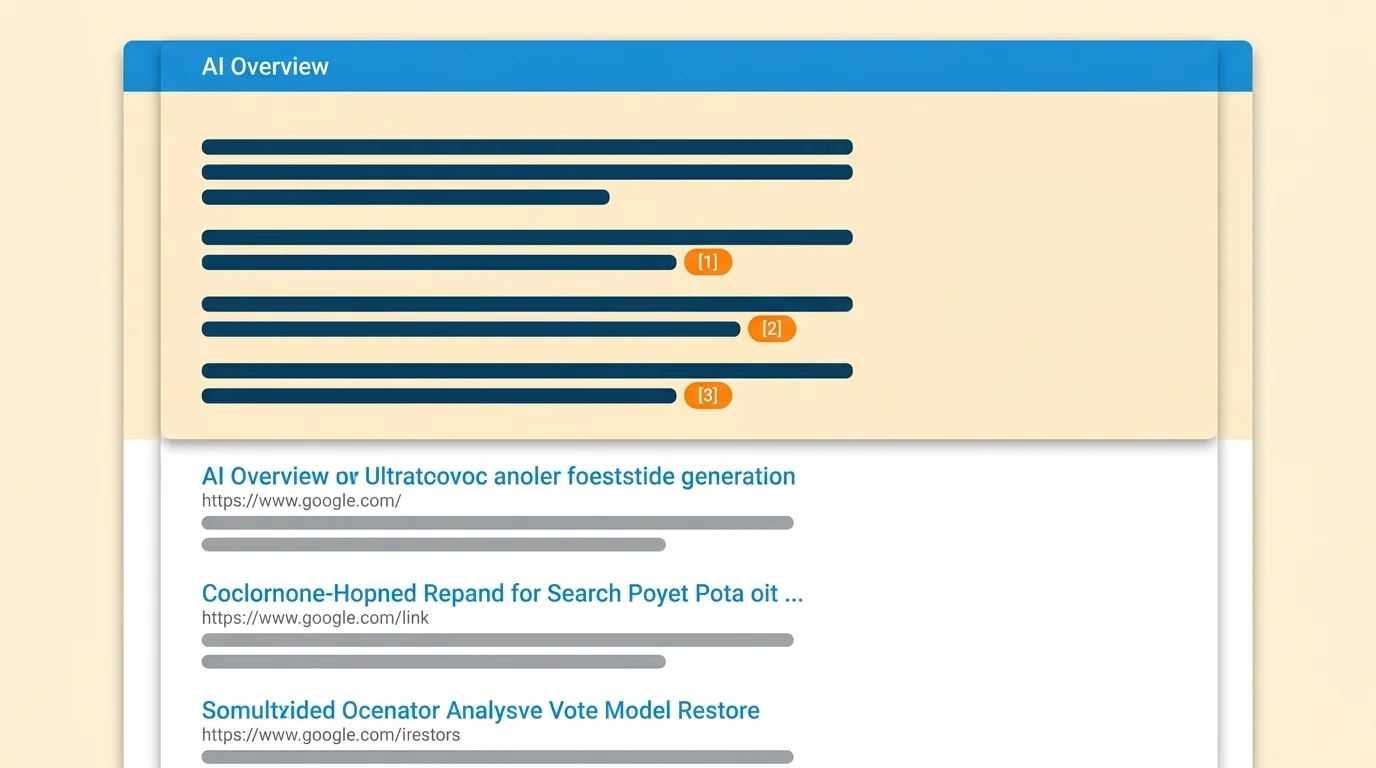

Challenge 1: AI Overviews and the Zero-Click SERP

The biggest 2026 SERP story is not an algorithm update. It is that Google has quietly redrawn the contract between publishers and traffic. AI Overviews (AIO) now appear on 99.9% of informational keywords, and on those queries organic CTR fell from 1.76% to 0.61%. That drop is reported as 61%, although the two endpoints as quoted work out to roughly 65%. The same source pegs the average zero-click rate on AIO-triggered queries at 83%, against roughly 60% on traditional queries. Search Engine Journal reports that only 1% of users click sources cited inside an AI Overview. The AdExchanger review of the post-AIO publisher landscape puts year-over-year traffic losses in a 20% to 90% range, depending on vertical.

The vertical asymmetry is worth pausing on, because it changes what your dashboard is allowed to claim. AIO share is highest in Science (43.6%), Health (43.0%), and Pets (36.8%), and lowest in Shopping (3.2%), Real Estate (5.8%), and News (15.1%). If you are a Shopify operator looking at organic-traffic dashboards and panicking about a 70% drop, your category is probably not the one that should be panicking yet. If you publish health or science content, the floor has already moved.

The cleaner read of "organic search is dying" is this: organic search is being reallocated. The traffic does not vanish; it gets absorbed by the AIO answer panel and a small number of cited sources. HubSpot's 2026 survey separately notes that 40.6% of marketers now cite "updating SEO for search changes" as a top trend, and 50% of consumers report using AI-powered search. The win condition has shifted from ranking #1 to being the cited source inside the AIO box.

What that looks like operationally: structured FAQ blocks that mirror real People Also Ask (PAA) queries, a clear primary-claim sentence inside the first 100 words of every page, and explicit answer scoping ("In 2026, the X is Y, because Z"). It is not a content-quality story. It is a citation-extraction story.
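One way to make a structured FAQ block machine-readable is schema.org FAQPage markup. The sketch below builds that JSON-LD from question and answer pairs. The example pair is hypothetical, and whether this markup changes AIO citation is an assumption you would need to test, not something the data above establishes.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Hypothetical pair; the answer leads with a scoped primary claim.
pairs = [
    (
        "What is the biggest digital marketing challenge in 2026?",
        "In 2026, the most-cited challenge is measuring marketing ROI, "
        "because 33% of marketers in HubSpot's survey flagged it.",
    ),
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
print(markup)
```

The output would typically sit inside a script tag of type application/ld+json on the page that carries the visible FAQ text.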

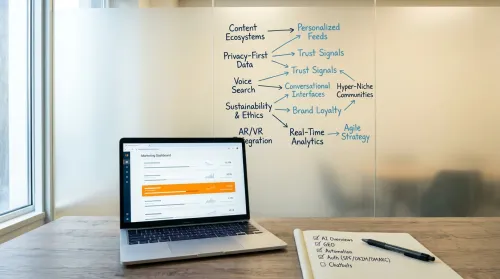

Challenge 2: Algorithm Volatility, Reframed for the AI Era

Most articles on this topic still warn you to "stay ahead of algorithm changes." That framing is from 2019 and it has aged into noise. The 2026 reality is that the algorithm is no longer the ranking system alone — it is the answer-generation system on top of it. Optimising for crawl, index, and rank gets you a result. Whether that result gets surfaced inside an AIO is a second, partially-correlated question, and very few teams are testing the second question with a holdout.

Concretely: when you ship a content update, you can A/B the indexed version against the prior one for organic traffic, and that experiment is well-understood. But you cannot easily measure whether your update changed your AIO citation rate — that comparison requires logging AIO appearances per query before and after, which most teams do not yet do. Tools like SE Ranking and Semrush have started reporting AIO presence per keyword; treat those data points as the new control variable in any organic-experiment design.

If you read nothing else from this section: your update may have correlated with an organic traffic recovery, but whether it caused the recovery is a separate question, and the test that would answer it requires you to log AIO citation per query weekly.
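A minimal sketch of the before-and-after comparison, assuming you already keep one row per week and query recording whether your page was cited. The field names, queries, and ship date are made up for illustration.

```python
from datetime import date

def citation_rate(rows):
    """Share of logged (week, query) observations where the page was cited."""
    return sum(r["cited"] for r in rows) / len(rows) if rows else float("nan")

def before_after(log, shipped_on):
    """Split the log at the ship date and return (rate_before, rate_after)."""
    before = [r for r in log if r["week"] < shipped_on]
    after = [r for r in log if r["week"] >= shipped_on]
    return citation_rate(before), citation_rate(after)

# Hypothetical weekly log for two tracked queries.
log = [
    {"week": date(2026, 1, 5), "query": "zero-click rate", "cited": False},
    {"week": date(2026, 1, 5), "query": "aio citation", "cited": True},
    {"week": date(2026, 1, 12), "query": "zero-click rate", "cited": False},
    {"week": date(2026, 1, 12), "query": "aio citation", "cited": False},
    {"week": date(2026, 1, 19), "query": "zero-click rate", "cited": True},
    {"week": date(2026, 1, 19), "query": "aio citation", "cited": True},
    {"week": date(2026, 1, 26), "query": "zero-click rate", "cited": False},
    {"week": date(2026, 1, 26), "query": "aio citation", "cited": True},
]

before, after = before_after(log, shipped_on=date(2026, 1, 19))
print(f"before={before:.2f} after={after:.2f} change={after - before:+.2f}")
```

A raw before-and-after gap is still only a correlation. To get closer to a causal read, log the same rate for a set of queries whose pages you did not touch and compare the two changes.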

Challenge 3: The AI Adoption-Value Gap

The original challenge here used to be "harness data analytics." In 2026 that is no longer the bottleneck. The bottleneck is that everyone has the tools and almost no one is extracting value from them.

The numbers that matter: 87-88% of marketers now use generative AI in at least one workflow, up from 51% in 2024. But Supermetrics' 2026 Marketing Data Report finds that only 6% of organisations are AI "high performers" — the ones extracting measurable bottom-line value — and 52% of marketing teams do not own their own data strategy. MIT's review of more than 1,000 executives, cited in the same Supermetrics report, found that 95% of generative AI pilots fail to deliver measurable business value.

That 95% number is the one to internalise, because it tells you what is actually broken. The pilots are not failing because the models are bad. They are failing because there is no holdout. A team adopts an AI summarisation tool, reports a "30% productivity lift," and the lift turns out to be self-reported survey data with no control group — which is to say, no measurement at all. A clean read requires either a randomised assignment (half the team gets the tool, half does not, for a fixed period) or a synthetic control matched on pre-period output. Neither is hard to set up. Almost no one is doing it.
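Here is roughly what the randomised version could look like: a seeded random split of the team, then a permutation test on the difference in mean output. The team names and output counts are invented, and the permutation test is my choice of analysis, not one the reports above prescribe.

```python
import random
from statistics import mean

def assign(members, seed=0):
    """Randomly split members into a tool group and a holdout group."""
    rng = random.Random(seed)
    shuffled = members[:]
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def permutation_test(treated, control, n_iter=10_000, seed=0):
    """Return (observed mean difference, two-sided permutation p-value)."""
    rng = random.Random(seed)
    observed = mean(treated) - mean(control)
    pooled = list(treated) + list(control)
    hits = 0
    for _ in range(n_iter):
        rng.shuffle(pooled)
        diff = mean(pooled[:len(treated)]) - mean(pooled[len(treated):])
        if abs(diff) >= abs(observed):
            hits += 1
    return observed, hits / n_iter

team = [f"marketer_{i}" for i in range(10)]  # hypothetical team
tool_group, holdout_group = assign(team, seed=42)

# Made-up weekly output counts (for example, briefs shipped) per person.
tool_output = [12, 14, 11, 15, 13]
holdout_output = [10, 11, 9, 12, 10]

lift, p_value = permutation_test(tool_output, holdout_output)
print(f"lift={lift:.1f} briefs/week, p={p_value:.3f}")
```

The important part is the order of operations: the split is fixed before anyone touches the tool, and the output metric is counted the same way for both groups.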

The cleaner reframe of "AI in marketing" for 2026 is not whether to adopt; it is how to govern. Who owns the prompt library? Who audits output for hallucination before it ships? Who runs the holdout when someone claims a productivity lift? If those three questions do not have named owners on your team, your AI programme is in the 95%, not the 6%.

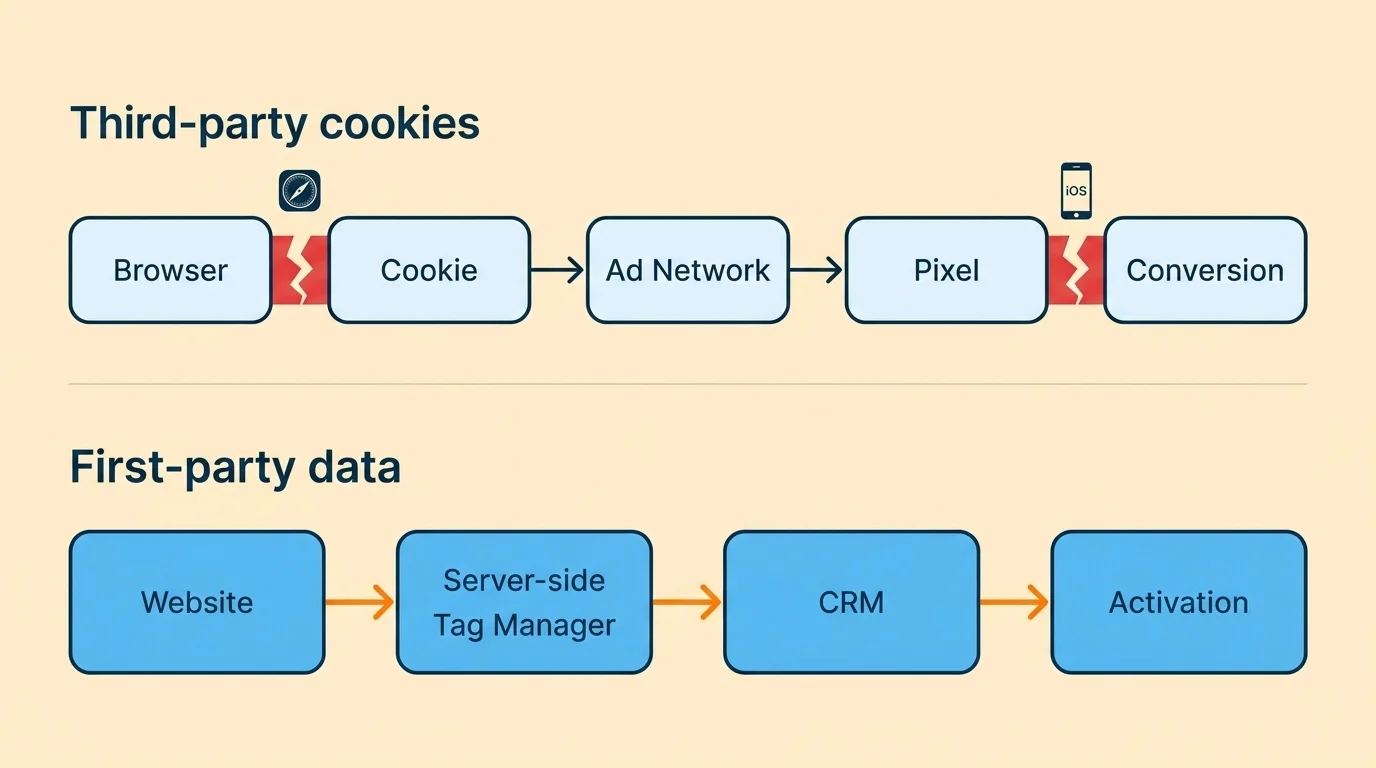

Challenge 4: First-Party Data and the Cookie Reversal

Here is a fact most 2024 articles on this topic now have wrong: Google abandoned its full Chrome third-party cookie deprecation, announcing in July 2024 that it would pursue user choice instead and confirming in April 2025 that it would keep Chrome's existing cookie controls rather than ship a new standalone prompt. Cookies on Chrome are not gone. But Safari and Firefox already block third-party cookies by default, and the strategic shift to first-party data has not reversed; only the timeline has. The brands that built first-party data programmes during the deprecation panic are now compounding that investment; the brands that waited for the deadline are still flat-footed.

Federated Digital Solutions reports that mature first-party data programmes deliver up to 8x ROAS and 25% lower CPA. Treat those numbers carefully — they are aggregates, the holdout construction is not specified, and the 8x figure almost certainly comes from the right tail of the distribution. But even discounted heavily, the directional read is consistent across vendors: brands with consented, identity-resolved first-party data outperform brands relying on third-party signals.

What you can actually do this quarter: stand up a server-side tag manager (Google Tag Manager server-side, Tealium, or Snowplow), gate at least one piece of high-value content behind an email opt-in with a clear value exchange, and pipe the captured events directly into your CRM rather than letting them die in a session-scoped cookie. The infrastructure decision matters more than the campaign tactic. The campaign tactic only compounds if the infrastructure is collecting clean data.
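To make "pipe the captured events into your CRM" concrete, here is a sketch of the record a server-side handler might build after an email opt-in. Every field name is hypothetical and matches no particular vendor's schema. The sketch stops short of the actual CRM call, which depends on your stack.

```python
import hashlib
import json
from datetime import datetime, timezone

def normalise_email(email):
    """Trim whitespace and lowercase so the same address always hashes alike."""
    return email.strip().lower()

def hash_email(email):
    """SHA-256 of the normalised email, a common first-party match key."""
    return hashlib.sha256(normalise_email(email).encode("utf-8")).hexdigest()

def build_event(email, event_name, consented, source_url):
    """Return a CRM-ready event dict, or None if the user has not consented."""
    if not consented:
        return None
    return {
        "event_name": event_name,  # hypothetical field names throughout
        "event_time": datetime.now(timezone.utc).isoformat(),
        "email_sha256": hash_email(email),
        "source_url": source_url,
        "consent": True,
    }

event = build_event(
    " Reader@Example.com ",
    "gated_report_download",
    consented=True,
    source_url="https://example.com/report",
)
print(json.dumps(event, indent=2))
```

The consent check comes first on purpose: a record that should not exist is cheaper to never create than to delete later.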

Challenge 5: Measuring ROI in a Post-Attribution World

This is HubSpot's #1 reported challenge for 2026 (33%) and it is also the one most likely to be answered with the wrong tool.

The mechanics, briefly: Apple's Link Tracking Protection, introduced in iOS 17, strips known click identifiers (such as gclid and fbclid) from URLs opened in Safari Private Browsing, Mail, and Messages. Campaign-level utm_* parameters are generally left intact, but the user-level identifier that ad platforms rely on to match a click to a conversion is gone. On a meaningful share of conversions, then, the click that produced the conversion arrives at your site with no click ID attached. Pixel-based ad-network attribution, which most dashboards still rely on, cannot reliably tie those conversions back to an ad click. The attributed conversion count under-reports the true conversion count, and the gap is not constant: it varies by audience composition.

Three approaches replace pixel-based attribution, and they are not interchangeable. Server-side event tracking (server-side GTM, Meta's Conversions API) captures the conversion event on your server and pushes it to ad platforms with first-party identifiers; it is useful for the deterministic floor of attribution. Marketing Mix Modelling (MMM), which is having a real comeback because pixel attribution broke, regresses revenue against channel spend at a daily or weekly grain; it is useful for incrementality at the channel level, but only honest if the model also includes adstock decay rates and a saturation curve. If your agency cannot produce those two parameters when asked, you are looking at a regression with marketing labels, not an MMM. Data-driven attribution in GA4 is a Shapley-value model on cross-channel paths; it is useful for credit allocation across known channels, but it does not solve the underlying problem of unobserved conversions.
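For readers who have not met the two MMM parameters before, here is a small sketch of what they do to a spend series. Geometric adstock and a Hill-type saturation curve are common choices rather than the only ones, and the spend figures, decay rate, and half-saturation point below are made up. In a real model those parameters are estimated from data, not typed in.

```python
def geometric_adstock(spend, decay):
    """Carry a fraction `decay` of the previous period's adstock forward."""
    out, carry = [], 0.0
    for x in spend:
        carry = x + decay * carry
        out.append(carry)
    return out

def hill_saturation(x, half_saturation, shape=1.0):
    """Diminishing-returns curve in [0, 1); equals 0.5 at x == half_saturation."""
    if x <= 0:
        return 0.0
    return x**shape / (x**shape + half_saturation**shape)

weekly_spend = [100.0, 0.0, 0.0, 50.0]  # made-up spend per week
adstocked = geometric_adstock(weekly_spend, decay=0.5)
response = [hill_saturation(a, half_saturation=100.0) for a in adstocked]

print(adstocked)
print([round(r, 3) for r in response])
```

The adstocked series is what enters the regression in place of raw spend. Weeks two and three show non-zero effective spend even though nothing was bought, which is the carry-over a plain regression on raw spend would miss.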

The honest answer to "how do I track conversions in 2026" is: layer all three, accept that the absolute numbers will not reconcile, and report a directional incrementality estimate alongside the deterministic floor. Anyone selling you a single number with two-decimal precision is not selling you measurement; they are selling you confidence theatre.

Challenge 6: Social Ads That Actually Convert

The 2026 social-ad story is two layers. The platform layer (Meta, TikTok, LinkedIn) has tightened ad-cost benchmarks and rewarded creator-style native creative over polished brand assets. That part is well-covered elsewhere. The measurement layer is where most teams are leaving real money on the table.

Federated Digital reports that 71% of consumers say they would shop more if AR were available, and 30%+ of marketers are integrating AR/VR experiences into social campaigns. Meta and Snap continue to expand their AR ad formats; TikTok Effect House outputs are now plausible at production scale. But the measurement question — does an AR-enabled creative cause a conversion lift, or do the people who interact with it self-select into being closer to purchase anyway — is unanswered for most teams. A geographic holdout (run AR creative in two test markets, hold it out in two matched control markets, compare incremental revenue) takes a fortnight to design and answers the question cleanly. Most teams instead compare AR-engaged users against non-AR-engaged users in the same cohort, which measures self-selection, not the creative.
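The geographic holdout described above reduces to a small calculation once the markets are picked. The sketch uses a difference-in-differences comparison, which is my choice of estimator for "compare incremental revenue", and the revenue figures are invented.

```python
from statistics import mean

def diff_in_diff(test_pre, test_post, control_pre, control_post):
    """Change in test markets minus change in control markets."""
    test_change = mean(test_post) - mean(test_pre)
    control_change = mean(control_post) - mean(control_pre)
    return test_change - control_change

# Made-up average weekly revenue per market: two test, two matched control.
test_pre = [100.0, 120.0]
test_post = [118.0, 140.0]
control_pre = [98.0, 122.0]
control_post = [104.0, 128.0]

incremental = diff_in_diff(test_pre, test_post, control_pre, control_post)
print(f"incremental weekly revenue per market: {incremental:.1f}")
```

Subtracting the control-market change is what removes seasonality and anything else that hit all four markets at once. With only two markets per arm there is no meaningful error bar, so read the result as directional.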

Personalisation is the other half of this story, and the headline number gets misused. Federated Digital's stat that 75% of consumers are more likely to buy from brands delivering personalised content is a stated-preference survey result, not an observed-behaviour result. Treat it as a directional signal, not a forecast.

Challenge 7: Content That Earns the Click in a Zero-Click World

Combine challenges 1 and 6 and you arrive at the content question. If 83% of AIO-triggered queries end without a click, what does content do?

It does two things. It earns AIO citation: the 1% of users who click through after reading the AIO have already read the summary and still chosen to visit, so they are plausibly higher-intent than the average organic visitor, and structuring content for citation extraction (clear primary-claim sentences, FAQ schema, named-entity coverage) is the single highest-leverage content-strategy change a team can make this year. And it earns email capture, because if organic traffic is harder to monetise on first visit, the only durable response is to convert that visit into an owned-channel relationship.

The mistake to avoid: writing more content. The 95th-percentile content team in 2026 writes less and instruments more. Every piece is a small experiment with a measurable hypothesis: "this article will drive X% AIO citation rate in queries it targets, measured weekly." If you cannot state the hypothesis, the article is not finished.

Challenge 8: Conversion Rate Optimisation, Without the Theatre

CRO is the easiest discipline to fake and the hardest to do honestly. Most "lift" claims published by CRO vendors share a common defect: they compare exposed users against non-exposed users in the same time window without controlling for traffic-source mix, device mix, or seasonality. A clean A/B test requires randomised assignment at session start, a pre-registered primary metric, and a sample size large enough to clear the minimum detectable effect you actually care about — typically 5-10% relative lift on conversion rate, which requires meaningfully more traffic than most teams realise.

Tools matter here, briefly: VWO, Optimizely, and AB Tasty for full-funnel experimentation; Hotjar and Microsoft Clarity for qualitative session insight; Mixpanel or Amplitude for cohort analysis on the back end. The tool stack is not the bottleneck. The bottleneck is whether someone on the team has run a power calculation before the test starts. If not, you will run an underpowered test, observe a 4% lift, declare a win, and watch the metric drift back to baseline within two months.
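The power calculation itself is a few lines. This sketch uses the standard normal-approximation formula for a two-sided two-proportion z-test. The 3% baseline conversion rate, the 5% significance level, and the 80% power are assumptions you should replace with your own.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Visitors needed per arm to detect the lift with a two-sided z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)

# Assumed 3% baseline; compare the two ends of the 5-10% range.
for lift in (0.10, 0.05):
    n = sample_size_per_arm(baseline_rate=0.03, relative_lift=lift)
    print(f"{lift:.0%} relative lift on a 3% baseline: {n:,} visitors per arm")
```

On these assumptions the 10% case already needs tens of thousands of visitors per arm, and halving the detectable lift roughly quadruples that. This is why a 4% lift from an ordinary two-week test rarely survives.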

The most expensive metric in most marketing dashboards is not the one that is wrong; it is the one that is right but meaningless. A conversion-rate lift number is meaningless without a holdout. Before you celebrate the next win, at least ask how the holdout was constructed.

What you can ship this Monday

Every section above lands on the same underlying problem: the marketing measurement stack most teams inherited was built for a deterministic web that no longer exists. AI Overviews, third-party cookie blocks, iOS link-tracking protection, and the AI adoption-value gap all break in the same direction — they widen the distance between what the dashboard reports and what actually happened.

You are not going to fix that this quarter. But you can pick one experiment to ship this Monday: log AIO citation rate for your top 25 ranking queries this week, baseline it, and re-measure it weekly. That single dashboard change, a column for "appeared in AIO" next to your existing ranking column, gives you the missing variable in every other measurement question you are trying to answer in 2026. It costs nothing. It takes an afternoon. And once it is running, you will know within a quarter which of these eight challenges is actually the one biting you.
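If the log lives in a spreadsheet, the summary can be this small. The column names and rows below are hypothetical, and the sheet is inlined as a string only so the sketch runs on its own; in practice you would read a CSV export.

```python
import csv
import io
from collections import defaultdict

def weekly_summary(rows):
    """Per-week AIO presence rate and citation rate across tracked queries."""
    totals = defaultdict(int)
    appeared = defaultdict(int)
    cited = defaultdict(int)
    for row in rows:
        week = row["week"]
        totals[week] += 1
        appeared[week] += row["appeared_in_aio"] == "1"
        cited[week] += row["cited_in_aio"] == "1"
    return {
        week: {
            "aio_rate": appeared[week] / totals[week],
            "citation_rate": cited[week] / totals[week],
        }
        for week in sorted(totals)
    }

# Hypothetical hand-filled sheet: ranking column plus two AIO columns.
sheet = io.StringIO(
    "week,query,rank,appeared_in_aio,cited_in_aio\n"
    "2026-W03,first-party data strategy,4,1,0\n"
    "2026-W03,server-side tagging,2,1,1\n"
    "2026-W04,first-party data strategy,3,1,1\n"
    "2026-W04,server-side tagging,2,0,0\n"
)
rows = list(csv.DictReader(sheet))
summary = weekly_summary(rows)
print(summary)
```

Keeping presence and citation as separate columns matters: a query where no AIO appeared is a different situation from one where an AIO appeared and cited someone else.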

Pick one. Ship it. Re-measure. The rest is just noise.

Frequently Asked Questions

What are the biggest digital marketing challenges in 2026?

Per HubSpot's 2026 survey of 1,500+ global marketers, measuring marketing ROI is the #1 reported challenge (cited by 33%), followed by keeping pace with platform changes (29.8%) and lead generation (29.6%). The deeper structural shift is AI Overviews: they now appear on 99.9% of informational searches and drive an 83% zero-click rate, gutting the organic-traffic playbook most teams still rely on.

How should a small business prioritise these challenges?

Pick two challenges, not eight. For most SMBs the highest-leverage pair is (1) building first-party data via gated content, email, and loyalty programmes (brands with mature first-party data programmes see up to 8x ROAS) and (2) targeting long-tail informational queries with content structured for AI Overview citation. Both compound; both cost time more than money.

Will AI replace marketers?

No. 87-88% of marketers already use generative AI in at least one workflow, but only 6% of organisations extract real business value from it (Supermetrics 2026), and MIT found 95% of generative AI pilots fail to deliver measurable impact. AI replaces tasks, not strategy. The marketers who win in 2026 are the ones who learn to prompt, audit, and govern AI, not the ones who outsource judgment to it.

How do I track conversions in 2026?

Layer three approaches: (1) server-side tag management (e.g., server-side GTM) to capture first-party events directly, (2) Marketing Mix Modelling (MMM) to correlate spend with revenue at the channel level, which is making a comeback because pixel-based attribution broke after iOS 17 Link Tracking Protection, and (3) consented first-party data tied to your CRM. Treat this as infrastructure replacement, not a patch.